Building a Custom AI LLM for an Affiliate Marketing Company

Discover how GetDevDone fine-tuned Llama 3 for an established affiliate marketing agency.

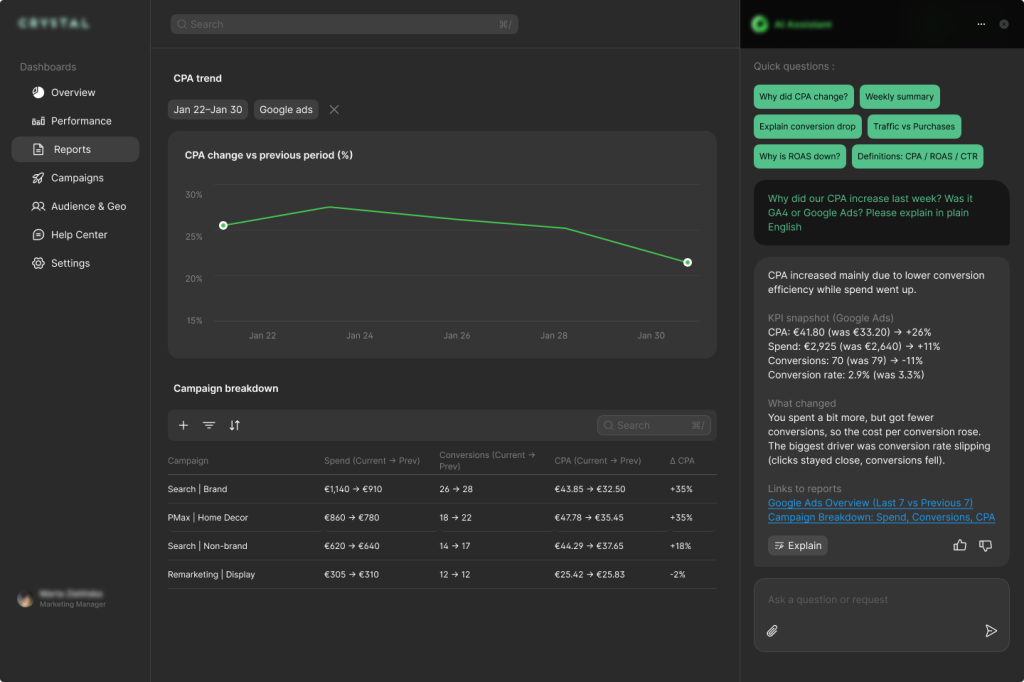

An AI reporting assistant connects to GA4 and Google Ads and turns client metrics into instant answers via a conversational interface. Using RAG, it explains KPI shifts, trends, and campaign results based on real data and agency standards, cutting reporting time, shortening calls, and boosting account manager productivity.

Our client, a digital marketing agency, runs performance campaigns for clients across multiple verticals. The agency turns analytics into practical insight through clear reporting standards that keep client discussions grounded in the data.

Despite having dashboard access, the agency’s clients repeatedly asked the same questions each reporting cycle: why metrics changed, whether spikes or drops were expected, and how to interpret short-term fluctuations. Account managers spent time re-explaining identical KPIs across channels, making routine reporting unnecessarily resource-intensive.

The team needed a system that could interpret performance changes in real time without replacing human judgment. It had to ground every answer in actual GA4 and Google Ads data while following the agency’s established reporting playbook. The team also had to be able to update definitions and explanations as reporting standards changed, without model retraining or engineering work, and each client’s data had to stay clearly separated.

To address these challenges, GetDevDone’s engineers built a client-facing chatbot that responds to performance-related questions using each client’s real metrics and the agency’s established reporting language. It operates on top of a normalized reporting layer that aligns the KPIs already used in client conversations.
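As an illustration of what a normalized reporting layer can look like, the sketch below maps source-specific fields onto shared KPI names. It is a minimal sketch, not the agency's actual layer: the `KpiValue` type, the two mapping tables, and the flat input dictionaries are assumptions made for the example. The one source-specific detail it relies on is that Google Ads reports cost in micros (millionths of the account currency).

```python
from typing import NamedTuple


class KpiValue(NamedTuple):
    source: str   # "ga4" or "google_ads"
    kpi: str      # shared KPI name used in client conversations
    value: float


# Hypothetical mapping tables: source field name -> shared KPI name.
GA4_TO_KPI = {"sessions": "sessions", "totalRevenue": "revenue"}
ADS_TO_KPI = {"metrics.clicks": "clicks", "metrics.cost_micros": "spend"}
MAPPINGS = {"ga4": GA4_TO_KPI, "google_ads": ADS_TO_KPI}


def normalize(source: str, raw: dict) -> list[KpiValue]:
    """Translate one raw row (assumed to be a flat dict) into shared KPIs."""
    if source not in MAPPINGS:
        raise ValueError(f"unknown source: {source}")
    result = []
    for field, kpi in MAPPINGS[source].items():
        if field not in raw:
            continue  # the row simply does not carry this metric
        value = float(raw[field])
        if field.endswith("cost_micros"):
            value /= 1_000_000  # micros -> currency units
        result.append(KpiValue(source, kpi, value))
    return result


ads_row = {"metrics.clicks": 120, "metrics.cost_micros": 45_500_000}
print(normalize("google_ads", ads_row))
```

Keeping the mapping in plain tables means a renamed KPI is a data change rather than a code change.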

The chatbot was set up to:

Each request is processed through a client-scoped data interface, so conversations only access the relevant account’s data.
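One way to picture a client-scoped data interface is an object that is bound to a single account when the conversation starts and exposes no way to name another account afterwards. The class, the in-memory store, and the client names below are hypothetical; the case study does not describe how the real interface is built.

```python
class ClientScopedMetrics:
    """Read-only view over one client's metrics (illustrative sketch)."""

    def __init__(self, store: dict, client_id: str):
        if client_id not in store:
            raise KeyError(f"unknown client: {client_id}")
        # Keep only this client's rows; the full store is not retained.
        self._rows = dict(store[client_id])

    def get(self, kpi: str, period: str) -> float:
        # No client_id parameter: a conversation cannot ask for another account.
        return self._rows[(kpi, period)]


# Hypothetical in-memory store keyed by client, then by (KPI, period).
STORE = {
    "acme": {("sessions", "2025-05"): 1000.0, ("sessions", "2025-06"): 850.0},
    "globex": {("sessions", "2025-06"): 4200.0},
}

acme = ClientScopedMetrics(STORE, "acme")
print(acme.get("sessions", "2025-06"))
```

Because the scope is fixed at construction time, isolation does not depend on every later query remembering to filter by client.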

When a question is asked, the system retrieves the relevant definitions, naming conventions, and explanation patterns from the agency’s reporting glossary and playbook. The response pipeline then combines this context with the underlying metrics to generate explanations that show what changed and how the numbers were derived.
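The retrieval and assembly steps described above can be sketched as follows. This is a simplified stand-in under stated assumptions: glossary lookup uses plain word overlap instead of the embedding search a production RAG system would more likely use, the glossary entries are invented for the example, and the final call to a language model is left out. The names `retrieve`, `describe_change`, and `build_prompt` are hypothetical.

```python
import re

# Hypothetical glossary entries; the agency would maintain the real ones.
GLOSSARY = {
    "conversion rate": "Conversions divided by sessions, shown as a percentage.",
    "cpc": "Cost per click: spend divided by clicks.",
    "sessions": "Visits recorded by GA4 in the reporting period.",
}


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, glossary: dict, k: int = 2) -> list[str]:
    """Return up to k glossary terms sharing the most words with the question."""
    q = tokens(question)
    scored = sorted(
        ((len(q & tokens(f"{term} {text}")), term) for term, text in glossary.items()),
        reverse=True,
    )
    return [term for score, term in scored[:k] if score > 0]


def describe_change(kpi: str, previous: int, current: int) -> str:
    """Show what changed and how the figure was derived."""
    delta = current - previous
    if previous == 0:
        return f"{kpi}: {previous} -> {current} ({delta:+}, no baseline)"
    pct = delta * 100 / previous
    return f"{kpi}: {previous} -> {current} ({delta:+}, {pct:+.1f}%)"


def build_prompt(question: str, glossary: dict, changes: list[str]) -> str:
    """Combine retrieved definitions with the metric changes for the model."""
    terms = retrieve(question, glossary)
    definitions = "\n".join(f"- {t}: {glossary[t]}" for t in terms)
    data = "\n".join(f"- {c}" for c in changes)
    return f"Question: {question}\nDefinitions:\n{definitions}\nData:\n{data}"


change = describe_change("sessions", 1000, 850)
print(build_prompt("Why did my conversion rate drop?", GLOSSARY, [change]))
```

Because the definitions live in a glossary that is read at question time, editing an entry changes the next answer without touching the model.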

GA4 and Google Ads were connected first, as they accounted for most recurring client questions, and both provide stable programmatic access through official APIs.

Within six weeks, the chatbot was live, handling the recurring performance questions that had been taking up account manager time. Routine metric clarification moved into the client portal, reducing reactive support and freeing the team to focus on more strategic work.

More structured client conversations

Because basic metric questions were resolved ahead of meetings, client discussions started from a shared baseline. Conversations could then focus on interpretation, implications, and next steps rather than revalidating numbers.

Consistent explanations across accounts

Metric explanations followed the same documented reporting rules for every client, regardless of which account manager set up the account. This removed variations in how KPIs were explained and improved confidence in the figures and concepts being discussed.

More time for higher-value work

With routine explanations handled by AI, account managers can focus their expertise on core issues like analysis, planning, and strategic client engagement.
